Behavior Cloning (BC) has emerged as a highly effective paradigm for robot learning. However, BC lacks a self-guided mechanism for online improvement after demonstrations have been collected. Existing offline-to-online learning methods often cause policies to replace previously learned good actions due to a distribution mismatch between offline data and online learning.

In this work, we propose Q2RL (Q-Estimation and Q-Gating from BC for Reinforcement Learning), an algorithm for efficient offline-to-online learning. Our method consists of two parts: (1) Q-Estimation, which extracts a Q function from a BC policy using a few interaction steps with the environment, followed by online RL with (2) Q-Gating, which switches between BC and RL policy actions based on their respective Q values to collect samples for RL policy training.

Across manipulation tasks from the D4RL and robomimic benchmarks, Q2RL outperforms SOTA offline-to-online learning baselines in success rate and time to convergence. Q2RL is efficient enough to be applied in an on-robot RL setting. It learns robust policies for contact-rich, high-precision manipulation tasks such as pipe assembly and kitting in 1-2 hours of online interaction, achieving success rates of up to 100% and up to a 3.75x improvement over the original BC policy.

We estimate the Q-function of the pretrained BC policy by assuming that its action distribution approximates a Boltzmann distribution. Using only the BC policy's action log-probabilities and entropy (no training data required), we derive:
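Here is one way such an estimate can follow from the Boltzmann assumption. This is a sketch: the temperature $\alpha$ and the soft-value identity are our assumptions, not details stated here. Suppose $\pi_{BC}(a \mid s) \propto \exp(Q(s,a)/\alpha)$. Then its log-normalizer is the soft value $V^{\text{soft}}(s)$. Taking the expectation of $\log \pi_{BC}$ under $\pi_{BC}$ gives $V^{\text{soft}}(s) = V_{BC}(s) + \alpha\,\mathcal{H}(\pi_{BC}(\cdot \mid s))$, where $V_{BC}(s) = \mathbb{E}_{a \sim \pi_{BC}}[Q(s,a)]$. Solving for $Q$:

$$
\begin{aligned}
\log \pi_{BC}(a \mid s) &= \frac{Q(s,a) - V^{\text{soft}}(s)}{\alpha}, \qquad V^{\text{soft}}(s) = V_{BC}(s) + \alpha\,\mathcal{H}\big(\pi_{BC}(\cdot \mid s)\big) \\
\Rightarrow \quad \hat{Q}_{BC}(s,a) &= V_{BC}(s) + \alpha\Big[\log \pi_{BC}(a \mid s) + \mathcal{H}\big(\pi_{BC}(\cdot \mid s)\big)\Big]
\end{aligned}
$$

Because $\mathbb{E}_{a \sim \pi_{BC}}[\log \pi_{BC}(a \mid s)] = -\mathcal{H}(\pi_{BC}(\cdot \mid s))$, the bracketed term averages to zero under the BC policy. The estimate therefore agrees with $V_{BC}$ in expectation.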

The value function $V_{BC}$ is estimated via Monte Carlo returns from a small number of initial rollouts.
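Monte Carlo estimation of $V_{BC}$ amounts to computing discounted returns-to-go along each rollout. The Python sketch below does three things:

- computes those returns;
- pairs each visited state with its return as a regression target for a value network (the network itself is omitted);
- combines a value with the BC log-probability and entropy.

The discount `gamma`, the temperature `alpha` and the form $\hat{Q}_{BC} = V_{BC} + \alpha(\log \pi_{BC} + \mathcal{H})$ in `q_bc_estimate` are illustrative assumptions. All function names are hypothetical.

```python
from typing import Any, List, Sequence, Tuple


def returns_to_go(rewards: Sequence[float], gamma: float = 0.99) -> List[float]:
    """Discounted Monte Carlo return G_t for every step of one rollout."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Sweep backwards using G_t = r_t + gamma * G_{t+1}.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


def mc_value_targets(
    rollouts: Sequence[Tuple[Sequence[Any], Sequence[float]]], gamma: float = 0.99
) -> List[Tuple[Any, float]]:
    """Pair each visited state with its return-to-go.

    Each rollout is (states, rewards) of equal length. The resulting
    (state, G_t) pairs are regression targets for a value network V_BC.
    """
    targets = []
    for states, rewards in rollouts:
        for state, g in zip(states, returns_to_go(rewards, gamma)):
            targets.append((state, g))
    return targets


def q_bc_estimate(v_bc: float, log_prob: float, entropy: float, alpha: float) -> float:
    """Hypothetical Boltzmann-style Q estimate: V + alpha * (log pi + H)."""
    return v_bc + alpha * (log_prob + entropy)
```

Only a handful of rollouts are needed for the targets, because nothing else about the BC policy has to be learned: the log-probability and entropy come straight from the policy's output distribution.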

During online RL, Q-Gating maintains two Q-functions: a frozen $\hat{Q}_{BC}$ (preserving BC performance) and a trainable $Q_{RL}$ (enabling improvement). At each step, the action with the higher Q-value is executed:
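Below is a minimal Python sketch of this gating rule. It assumes each proposal is scored by its own Q-function: the frozen $\hat{Q}_{BC}$ for the BC action and $Q_{RL}$ for the RL action. The rule executes $a_{BC}$ if $\hat{Q}_{BC}(s, a_{BC}) \ge Q_{RL}(s, a_{RL})$, and otherwise $a_{RL}$. The callable interfaces and breaking ties toward BC are hypothetical choices for illustration.

```python
from typing import Any, Callable, Sequence, Tuple

Action = Sequence[float]
Policy = Callable[[Any], Action]
QFunction = Callable[[Any, Action], float]


def q_gate(
    state: Any,
    bc_policy: Policy,
    rl_policy: Policy,
    q_bc: QFunction,
    q_rl: QFunction,
) -> Tuple[Action, str]:
    """Return the action to execute and which policy proposed it."""
    a_bc = bc_policy(state)
    a_rl = rl_policy(state)
    # Score each proposal with its own critic: frozen Q_BC vs. trainable Q_RL.
    if q_bc(state, a_bc) >= q_rl(state, a_rl):
        return a_bc, "bc"  # ties go to BC, keeping known-good behavior
    return a_rl, "rl"
```

Whichever action is executed, the resulting transition would be stored for training the RL policy and $Q_{RL}$, while $\hat{Q}_{BC}$ stays frozen.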

This mechanism prevents catastrophic forgetting of good BC actions while allowing the RL policy to explore and improve in states where BC is suboptimal. An auxiliary BC loss further stabilizes training for safe on-robot deployment.
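The exact form of the auxiliary BC loss is not specified here. The sketch below uses one common choice, borrowed from TD3+BC (an assumption on our part). It adds a weighted mean-squared error between RL and BC actions to the usual actor objective $-Q_{RL}(s, \pi_{RL}(s))$. The code only computes the scalar loss on plain lists; a real implementation would use an autodiff framework. `bc_weight` is a hypothetical coefficient.

```python
from typing import Sequence


def actor_loss(
    q_values: Sequence[float],
    rl_actions: Sequence[Sequence[float]],
    bc_actions: Sequence[Sequence[float]],
    bc_weight: float = 0.1,
) -> float:
    """Batch actor loss: -mean Q_RL plus a weighted BC regularizer."""
    n = len(q_values)
    # Standard actor term: maximizing Q_RL means minimizing -Q_RL.
    rl_term = -sum(q_values) / n
    # Auxiliary BC term: mean squared distance between RL and BC actions.
    bc_term = sum(
        sum((x - y) ** 2 for x, y in zip(a_rl, a_bc))
        for a_rl, a_bc in zip(rl_actions, bc_actions)
    ) / n
    return rl_term + bc_weight * bc_term
```

A larger `bc_weight` keeps the RL policy closer to the BC actions, trading improvement speed for more conservative on-robot behavior.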

Q2RL consists of Q-Estimation and Q-Gating. Q-Estimation extracts a Q function from a BC policy using its value function, action log-probabilities, and entropy. During RL policy training, Q-Gating selects and executes whichever of the BC and RL actions has the higher Q value under its respective Q function. It then updates the RL policy on the collected interactions.

Q2RL uses BC actions for behaviors common across task settings, such as moving between bins.

RL takes over for grasping and placement when parts are in new locations outside the BC training distribution.

Q2RL does not require access to the BC training demonstrations during the online RL phase.

We evaluate Q2RL on a Franka Panda arm with a Robotiq 2F-85 gripper, using workspace and wrist RGB cameras. All tasks involve contact-rich, high-precision manipulation.

We compare Q2RL against IBRL, a SOTA BC-to-RL method.

All videos are shown at 1x speed.

Prior SOTA methods such as IBRL fail entirely on this longer-horizon, contact-rich task. Q2RL learns to grasp, align, and insert within 2.5 hours.


The BC policy for Kitting was trained with only a single object in each bin. Q2RL adapts to the modified setting with two objects per bin, a task distribution the BC policy was never trained on.

The BC policy achieves 95% success on Kitting-Original but only 35% on Kitting-Modified (two objects per bin). Q2RL raises success to 70% on the harder modified task, adapting to the distribution shift through online RL.


Q2RL strategically switches to RL actions to recover from common BC failure modes.

A core challenge in real-world RL is aggressive exploration that causes unsafe robot behavior. Q2RL enables safer exploration from the start by grounding policy improvement in estimated BC Q-values.

Timelapse of Q2RL learning pipe assembly, recorded every ten episodes (approx. 2 hours).

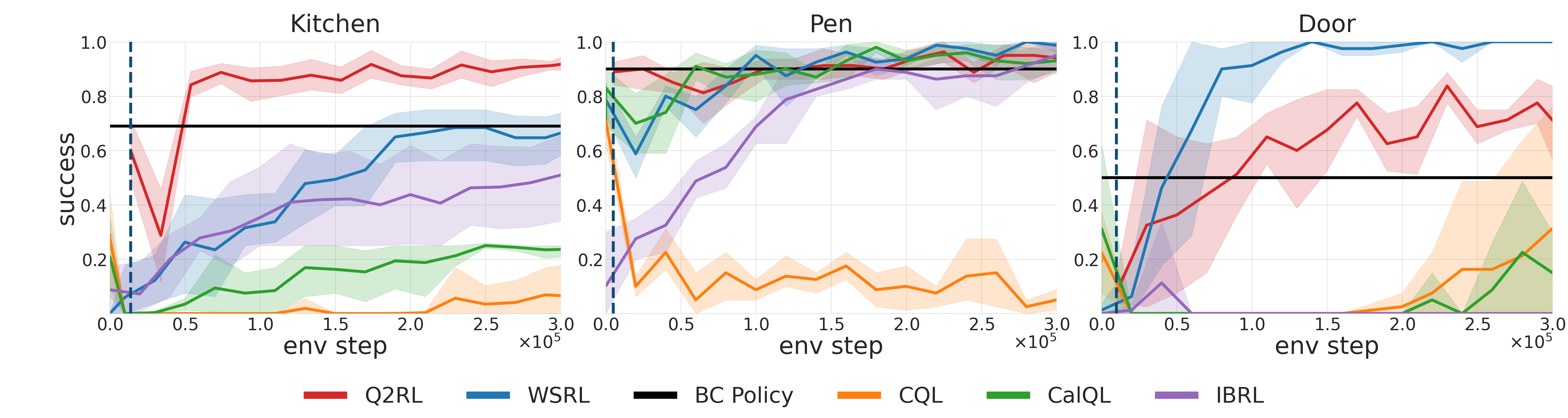

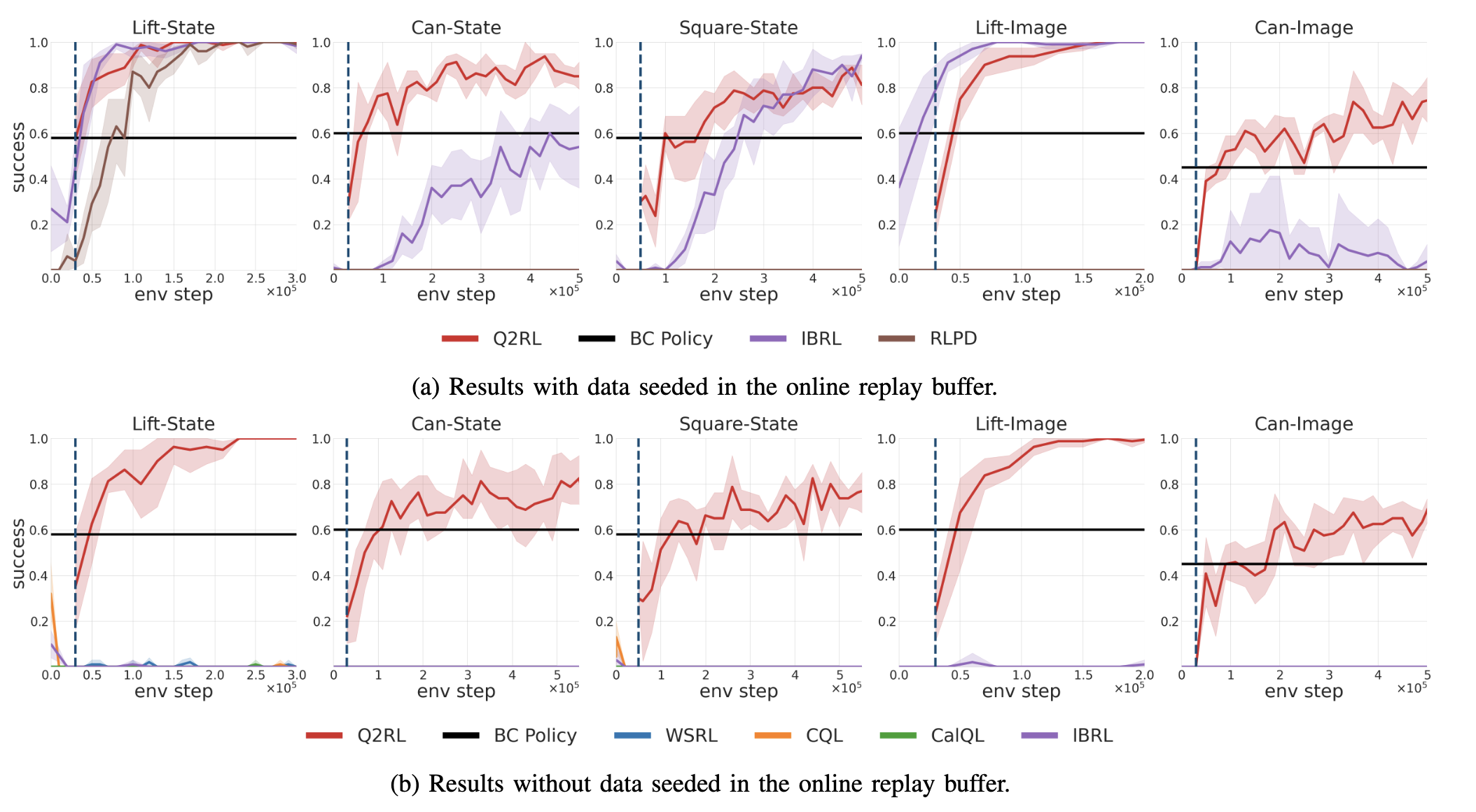

Q2RL outperforms SOTA offline-to-online baselines on D4RL and robomimic benchmarks across state-based and image-based observations.

Fig. 3: Results on D4RL (Kitchen, Pen, Door). Q2RL starts with strong initial performance from BC and continuously improves, outperforming WSRL, CalQL, CQL, and IBRL baselines.

Fig. 4: Results on robomimic (Lift, Can, Square), both with and without access to offline training data. Q2RL is the only method that reliably learns without offline data, while remaining competitive when offline data is available.

If you find Q2RL useful in your research, please cite our paper:

@inproceedings{dodeja2026q2rl, title = {When Life Gives You BC, Make Q-functions: Extracting Q-values from Behavior Cloning for On-Robot Reinforcement Learning}, author = {Dodeja, Lakshita and Biza, Ondrej and Vats, Shivam and Hart, Stephen and Tellex, Stefanie and Walters, Robin and Schmeckpeper, Karl and Weng, Thomas}, booktitle = {Robotics: Science and Systems (RSS)}, year = {2026}, }